Differentiated strategies for governing AI: towards cooperation or conflict?

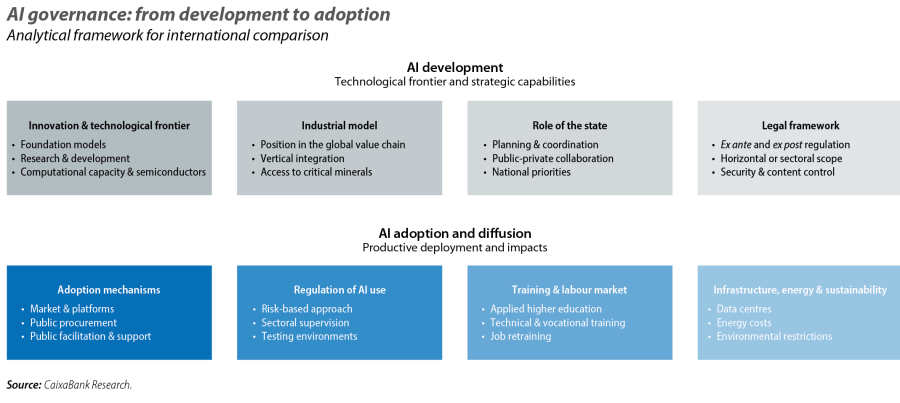

Generative artificial intelligence (AI) is a critical area of economic and strategic competition among the major powers, and its development depends both on the dynamism of the private sector and on state action. Together, they shape the scope and effects of a technology whose complex ecosystem spans innovation and its monetisation, positioning in the value chain, its diffusion and adoption, and the management of its externalities. This article reviews – from a geoeconomic perspective – the strategies adopted by the US, China and the EU in key areas such as regulation, the role of the state in the industrial model, public support instruments, and cross-cutting policies such as professional training and sustainability. We conclude with a reflection on the future interaction of these governance models and the potential areas of friction and cooperation that may arise.

The US seeks to shape the technological frontier

The potential of AI lies in the complexity, speed and reliability with which it performs tasks. Its development relies on a combination of cutting-edge knowledge for the design of language models, computers equipped with high-capacity processing chips, and a robust architecture – both physical (data centres) and digital (cloud infrastructure) – for information storage and model training.

In this field, the US has consolidated its position at the global AI frontier thanks to its human capital, technological capabilities and a favourable business environment.1 It boasts an innovation ecosystem built on elite universities and a concentration of STEM and international research talent. It also benefits from public support as an early-stage incubator, led by both civilian agencies (NSF) and defence agencies (DARPA), and from a cluster of large tech firms that are embedded in the economy’s industrial base and combine financial muscle with an appetite for risk. Added to this are favourable tax and regulatory frameworks: intervention during the development phase is minimal, there is still no comprehensive federal AI law2 and ex post intervention predominates. The Trump administration's action plan has placed even greater focus on the technological frontier, with a marked geostrategic emphasis,3 setting the explicit goal that US semiconductors, models and applications become hegemonic worldwide and the new «gold standard».4

In contrast, state planning, coordination and guidance are the foundation of the Chinese model. Although it is private companies that have capitalised on the exponential improvement in technological capabilities over the last decade, AI research and development are aligned with national priorities. Whereas the US seeks to define the technological frontier, China prioritises key links in the global industrial value chain,5 scale, technological self-sufficiency and security. Subsidies, tax incentives and public financing mechanisms at both central and provincial levels all contribute to this. The approach is complemented by preventive control of socially sensitive content, including ex ante registration and assessment requirements for recommendation systems in digital applications.6 Recent regulation tightens the limits on the public dissemination of information while leaving relatively more freedom for the research, development and training of models for productive or strategic uses.7

The EU, for its part, is seeking to establish a common governance framework to overcome the prevalence of national frameworks in AI development. The main strength of the European innovation ecosystem is its scientific and research base, with its universities and centres of excellence. However, it suffers from insufficient supranational coordination and limited prioritisation within its framework programmes, such as Horizon Europe. The financial system is less geared towards risk-taking and, together with the fragmentation of the internal market, hinders the transfer and monetisation of knowledge and the scaling-up of the technology.8 To protect its citizens, the EU’s regulatory framework prioritises risk-based ex ante regulation of AI uses,9 which may push development away from the cutting edge of innovation. Added to this is a high external dependence on advanced semiconductors and foundation models, which the EU is trying to mitigate through open strategic autonomy and the diversification of its economic partners.10

- 1

According to estimates based on data from Epoch AI, the US accounts for two-thirds of the world's AI-related computational capacity, followed by China with around 20%, while the EU accounts for just 5%.

- 2

The only general AI law in effect in the US is the one passed by the state of Colorado in 2024.

- 3

White House (2025), «America's AI Action Plan».

- 4

It thus shifts away from the previous focus on coordinating the innovation ecosystem and on industrial resilience, as set out in the National Artificial Intelligence Initiative Act (2020) and the CHIPS and Science Act (2022).

- 5

See the Focus «China’s alchemy: how it transforms critical minerals into global power» in MR01/2026.

- 6

Cyberspace Administration of China, CAC (2021), «Algorithm Recommendation Provisions»; CAC (2023), «Interim Measures for the Management of Generative AI Services»; CAC (2023), «Deep Synthesis Provisions»; and CAC (2025), «AI-generated Content Labeling Rules».

- 7

CAC (2023), «Interim Measures for the Management of Generative AI Services»; CAC (2023), «Deep Synthesis Provisions»; and CAC (2025), «AI-generated Content Labeling Rules».

- 8

M. Draghi (2024), «The Future of European Competitiveness».

- 9

EU (2024), «Artificial Intelligence Act».

- 10

The «AI Continent» Action Plan, presented by the Commission in 2025, extends the strategic public intervention approach applied to semiconductors in the European Chips Act (2023), complemented by the objectives of the Critical Raw Materials Act (2024) to ensure a secure and sustainable supply of critical raw materials.

China prioritises adoption and diffusion for productive uses

Beyond technological development, the economic and social impact of AI depends largely on how its adoption and diffusion are governed. Here, too, the approaches of the main players vary widely.

In the US, private entrepreneurial initiative and competition are taking the lead, with the major tech platforms and software providers acting as natural channels for scaling up towards businesses and consumers. State action focuses on removing barriers, providing critical infrastructure and using public procurement, especially in defence and security, as a driver of adoption. Regulation is mostly ex post, guided by voluntary cross-sector standards defined by a federal scientific agency (NIST), along with sectoral oversight in sensitive areas such as the protection of healthcare patients and financial services clients. In keeping with this logic of minimal intervention, the state acts as a facilitator and largely leaves the management of cross-cutting areas to the market, although the new national regulatory framework includes recommendations on professional retraining and on limiting the impact of data centre expansion on electricity costs.11

In the Chinese model, as in the development phase, the public sector plays a key role. The state acts as the coordinator of the ecosystem, ex ante regulator, financier and demander, channelling substantial public investment through large state-owned enterprises and into strategic sectors such as advanced industry, logistics, energy and security. The planned schedule sets sectoral and territorial penetration targets over different time horizons, with a roadmap that is due to culminate in a fully «smart» economy and society by 2035.12 To this end, vertical programmes have been defined to transform the industrial value chain,13 alongside controlled competitive environments, such as regulatory sandboxes and pilot zones, that make it possible to assess scalability without transferring risks to the wider system. This approach is accompanied by the integration of AI into higher education and into technical and professional training programmes. Energy and infrastructure planning forms part of the deployment strategy, while sustainability is subordinated to national economic security priorities.

Unlike in the US, where the diffusion of AI relies on large private platforms, and in China, where the state acts as a centralised demander, in the EU the adoption and diffusion of AI are structured primarily around regulation and public support. The fragmentation of the internal market and ex ante regulatory obligations for high-risk uses limit the pace and scale of adoption.14 Public-sector action combines regulation with EU-wide instruments – such as the Apply AI Strategy – and practical support – such as hubs and testing environments – aimed at facilitating sectoral implementation and reducing legal uncertainty.15 This approach tends to raise adoption costs and slow diffusion, especially among SMEs, for whom fixed costs and skills deficits weigh more heavily. Added to this are structural constraints, such as high energy costs and the environmental commitments associated with deploying compute-intensive infrastructure.16

- 11

White House (2026), «Artificial Intelligence: national policy framework».

- 12

These objectives are defined by the work programme of the AI Plus initiative launched in 2024 by the State Council, similar to the Internet Plus initiative of 2015.

- 13

For example, the AI+ Manufacturing initiative launched in 2025 under the umbrella of AI Plus.

- 14

M. Draghi, op. cit.

- 15

The AI Act (2024) establishes support mechanisms for AI deployment to facilitate regulatory compliance in high-risk uses, while the Apply AI Strategy (2025) integrates them into an action plan aimed at accelerating adoption, especially among SMEs and government entities.

- 16

IEA (2025), «Energy and AI».

The EU seeks its place in AI geopolitics

The rivalry between the US and China in the AI era is unfolding amid significant strategic uncertainty.17 It is unclear whether the advantage of being at the technological frontier will generate persistent revenue streams that are difficult to replicate, or whether competition will shift towards diffusion, deployment and the ability to scale up applications in key sectors. In either scenario, the power associated with AI will depend largely on control of key assets – advanced chips, computing capacity, energy, talent and industrial integration – so betting on a single trajectory could prove costly if the technology evolves in ways that diverge from initial assumptions.

This landscape tends to place middle powers in a position of technological dependence.18 The concentration of talent, investment and computing capacity in the US and China limits other countries' ability to influence the direction of technological change and amplifies the economic and social adjustment costs associated with AI. For the EU, the risk of falling behind sharpens the debate on the balance between regulation, competitiveness and scale. In particular, the Draghi report's diagnosis of internal market frictions and of the difficulty of scaling up innovation is in line with the recent shift towards approaches based on simplification and regulatory proportionality. The aim of this shift is to prevent the pursuit of legal certainty from ultimately penalising adoption and scale-up, especially among SMEs.19

Nevertheless, AI governance need not be reduced to bloc logic. Even amid strategic rivalry, recent multilateral initiatives point to areas where principles and practices can be coordinated. The focus on safety and regulation at the summits held at Bletchley Park (2023) and in Seoul (2024) broadened into a more comprehensive agenda covering innovation, digital skills, labour impact and sustainability in Paris (2025), and then into an emphasis on capacity gaps between advanced and emerging economies in New Delhi (2026). In the same vein, the framework promoted at the United Nations proposes a more inclusive and distributed global architecture, based on common principles and on mechanisms that complement national and regional strategies.20 For the EU, the challenge will be translating this cooperative agenda into real adoption and scale-up capabilities.

- 17

Foreign Affairs (2026), «Geopolitics in the Age of Artificial Intelligence: Strategy and Power in an Uncertain AI Future».

- 18

Foreign Affairs (2026), «The AI Divide: How U.S.-Chinese Competition Could Leave Most Countries Behind».

- 19

The European Commission’s proposal set out in the Digital Omnibus package of November 2025, currently under negotiation between the co-legislators, introduces a more pragmatic tone in the regulatory approach, with adjustments aimed at reducing the burden and facilitating technological adoption without altering protection objectives.

- 20

United Nations (2024), «Governing AI for Humanity».